Gigamon Insights

Note: Gigamon Insights is released as a Limited Availability (LA) feature, giving you the opportunity to evaluate its capabilities before its General Availability (GA) release. For access to LA software, contact your Gigamon account team or file a support case, and ensure you have reviewed the LA Terms and Conditions. During the LA period, you can file support cases with Gigamon support and provide feedback to Gigamon on your experience with Gigamon Insights.

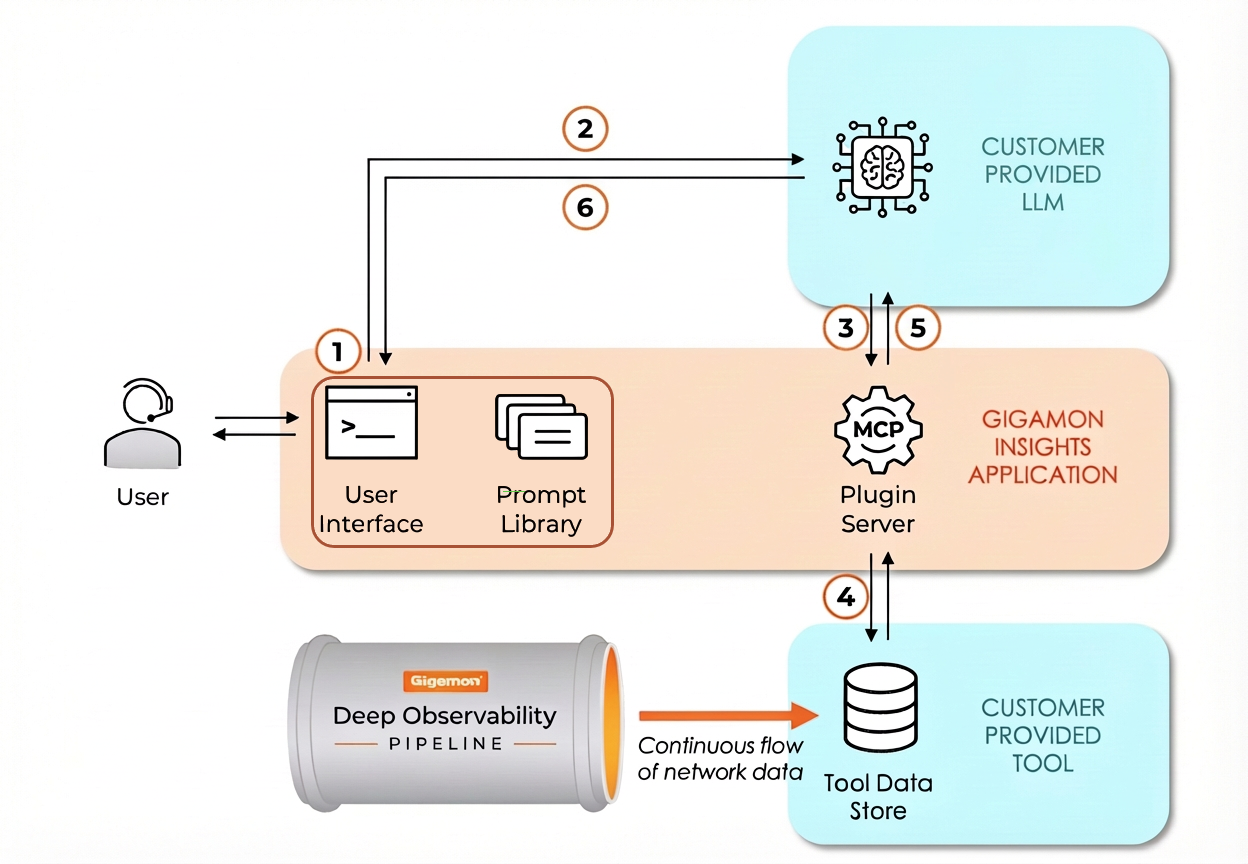

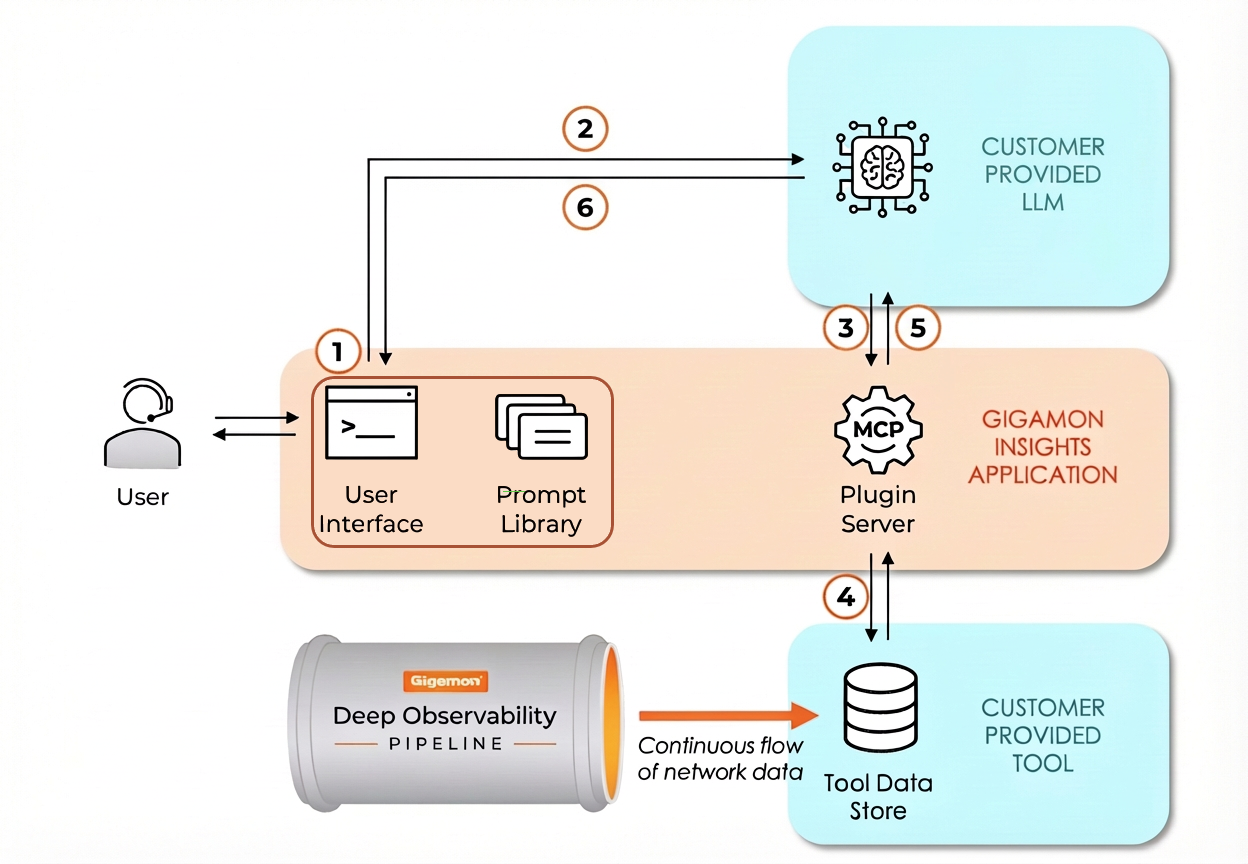

Gigamon Insights is a Generative and Agentic AI application that provides immediate, actionable answers to technical and business questions using trusted network packet data, enriched with additional context. Instead of relying on static dashboards and manual queries, you can ask questions in plain language and receive deep, context‑driven responses. It uses:

- Gigamon Application Metadata Intelligence (AMI) and AMX metadata as its primary data source.
- A curated prompt library for Security, Network, and Application Insights.
- Model Context Protocol (MCP) integrations with customer tools: Elastic and Splunk.
- Large Language Models (LLMs), Anthropic Claude Sonnet and Google Gemini, configured through GigaVUE‑FM.

What Gigamon Insights Can Do

- Ask questions using natural language prompts: Ask questions in free‑form, conversational language or use predefined prompts that follow best practices and are available globally to help teams work consistently across the organization.
- Create and save your own prompts: Create custom prompts tailored to your specific tasks and save them for later use. Your saved prompts are private to you and are not available globally, so you can experiment and refine prompts without affecting shared content.
- Upload a dashboard screenshot for context: Upload one dashboard screenshot per prompt. The screenshot provides visual context that aligns with what you see in your monitoring tools, helping the system generate more relevant responses.
- Capture and export feedback: Provide feedback using thumbs‑up or thumbs‑down ratings and optional comments. Export responses as PDF or Word documents for sharing, record‑keeping, and audit purposes.

How Gigamon Insights Works

1. Initial Prompt: The user submits a prompt, either free‑form or a predefined prompt from the library, and it is passed to the LLM.
2. Prompt Expansion: The LLM expands the user's prompt with additional context and generates multiple data queries behind the scenes.
3. Agentic Calls: The data queries are submitted through MCP.
4. Data Queries: The MCP server queries the data store for relevant data.
5. Iterative Retrieval: The LLM processes the returned data and, optionally, repeats the agentic calls to retrieve additional data and refine its insights.
6. Insight: The LLM returns a response that presents the resulting insight.
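
The six steps above can be pictured as a bounded retrieval loop. The following Python sketch is illustrative only: `StubLlm`, `StubMcpServer`, `answer`, the query strings, and the `max_rounds` limit are all hypothetical names and assumptions made for this example. They do not represent the Gigamon Insights implementation, the GigaVUE‑FM configuration, or any real MCP or LLM client API.

```python
from dataclasses import dataclass
from typing import Dict, List

# All classes and functions here are hypothetical stand-ins used to
# illustrate the flow; none of them is a Gigamon, MCP, or LLM vendor API.


@dataclass
class StubMcpServer:
    """Stands in for an MCP server in front of a data store (step 4)."""
    data_store: Dict[str, List[dict]]

    def run_query(self, query: str) -> List[dict]:
        # Look up records by query string; unknown queries return no data.
        return self.data_store.get(query, [])


class StubLlm:
    """Stands in for the LLM; a real model does far richer reasoning."""

    def expand(self, prompt: str) -> List[str]:
        # Step 2: turn one user prompt into several data queries.
        return [f"{prompt}:summary", f"{prompt}:top_talkers"]

    def follow_up(self, records: List[dict], asked: List[str]) -> List[str]:
        # Step 5: ask for detail on any host that has not been queried yet.
        wanted = [f"host:{r['host']}" for r in records if "host" in r]
        unique = list(dict.fromkeys(wanted))  # de-duplicate, keep order
        return [q for q in unique if q not in asked]

    def summarize(self, prompt: str, records: List[dict]) -> str:
        # Step 6: produce the final response from everything retrieved.
        return f"{prompt}: {len(records)} records analyzed"


def answer(prompt: str, llm: StubLlm, mcp: StubMcpServer, max_rounds: int = 3) -> str:
    queries = llm.expand(prompt)  # steps 1 and 2
    asked: List[str] = []
    records: List[dict] = []
    for _ in range(max_rounds):  # bound the optional repetition in step 5
        if not queries:
            break
        round_records: List[dict] = []
        for query in queries:  # step 3: one agentic call per query
            round_records.extend(mcp.run_query(query))  # step 4
            asked.append(query)
        records.extend(round_records)
        queries = llm.follow_up(round_records, asked)  # step 5
    return llm.summarize(prompt, records)  # step 6
```

In this sketch the loop ends either when the stub LLM has no further queries or when the assumed `max_rounds` limit is reached, which is one simple way to keep iterative retrieval from running indefinitely.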